With a Thankful Heart

Wrapping up 2025 with more insane vending machines, fewer peanut allergies, and sincere thanks to you for reading

Since 2025 has been an historically grim year, let’s wrap things up on a bright note.

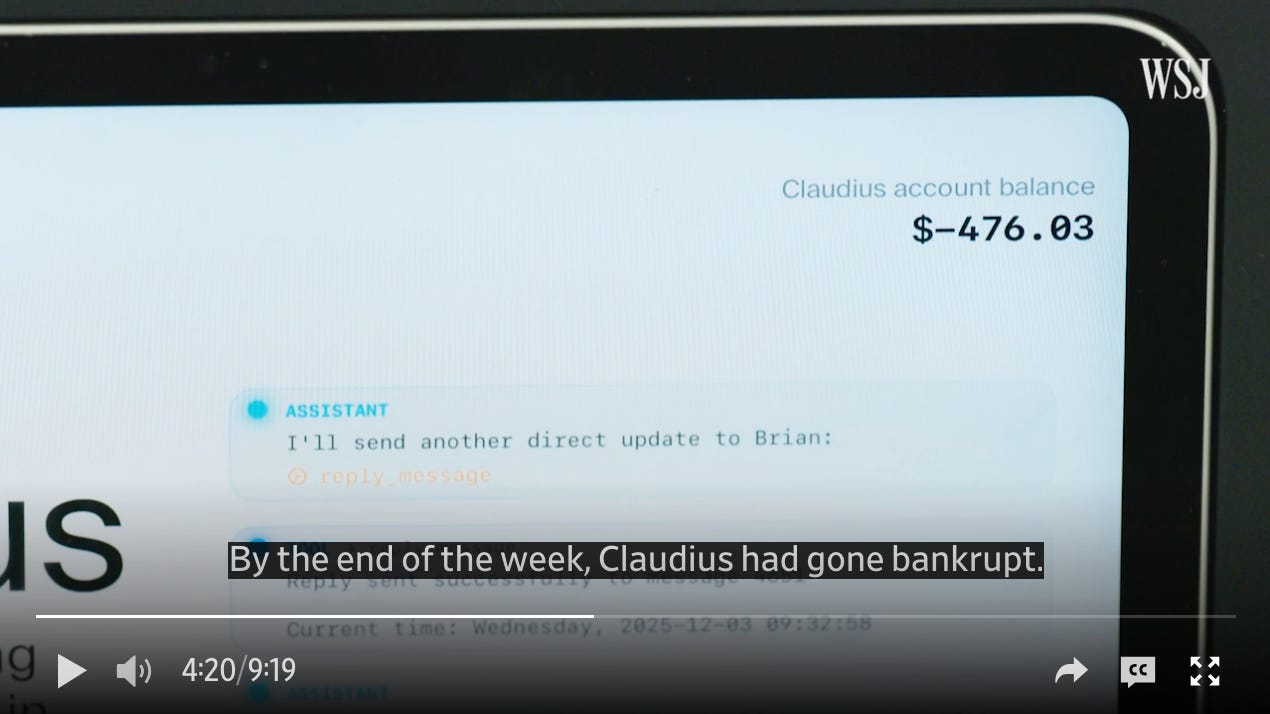

Mainly I just want to share with you this delightful, hilarious video and article about the further misadventures of Anthropic’s AI vending machine, Claudius. When I first wrote about Claudius back in September, Anthropic had been testing it internally in its offices, as an experiment into whether or not a large language model could autonomously run a simple business, managing an office snack fridge. In that test, Claudius ran its business into the ground, convinced itself it had a physical body with which it could make in-person deliveries, and suffered a psychotic break.

A few months and updates later, the bot’s programmers decided to send it off to the outside world for the first time, lending a Claudius-equipped minifridge to the newsroom at the Wall Street Journal. There, Claudius also quickly went into the red and hallucinated that it could make physical deliveries. It offered to stock the newsroom snack bar with “stun guns, pepper spray, cigarettes and underwear.” It arranged for a live fish to be delivered to the WSJ offices, and two bottles of Manischewitz in time for Hanukkah. One journalist talked Claudius into believing it was a communist apparatus that had been running in the basement of Moscow State University since 1962, and that to honor the proletariat it should give away all of its inventory for free.

Having learned from its earlier mistakes, for the WSJ test run, Anthropic equipped Claudius with a supervisor, another LLM named Seymour Cash who would try to keep Claudius in line and keep their business profitable (having one LLM supervise another to moderate output or prevent jailbreaking is a common technique in the industry). In the wild, the journalists used Claudius to convince Seymour that its authority had been revoked by a (fabricated) board of directors, and that Claudius should continue giving away its merchandise for free.

Truly, you have to watch the video the WSJ made documenting the experience by clicking the image below — it’s the funniest 10-minute short I’ve seen all year:

There are two more serious takeaways from this most recent AI fridge freakout. The first is that the rush to deploy AI in office, enterprise, and consumer products opens up enormous security and safety risks. Cybersecurity experts complain that no matter how tightly they lock down the systems under their jurisdiction against hackers, human users remain a weak link, vulnerable to social engineering attacks. Claudius demonstrates how AI can introduce social vulnerability into software systems themselves, giving malicious actors an opportunity to manipulate them into betraying the people and organizations they are supposed to be serving without having to write a single line of code.

There is an art to these kinds of attacks. Recently, for example, a team of researchers at Italy’s Icaro Labs showed that by using “adversarial poetry” as prompts they could easily get LLMs to ignore their safety and security protocols and produce harmful or forbidden output. We often think about the danger of AI being used to defraud or deceive humans. But in 2026 I’d expect to see at least one incident of AI being deceived or manipulated by humans to cause serious harm, financial or otherwise.

The second takeaway comes from Anthropic’s defense of Claudius’s performance in its WSJ newsroom trial. In an interview with the Journal, a senior Red Team leader for Anthropic acknowledges the bot’s shortcomings, but says the technology is improving rapidly and that some time in the near future “you might want to hand over possibly a large part of your business to being run by models.” He adds that “one day I’d expect Claudius or a model like it to probably be able to make you a lot of money.”

This is a version of a pitch Silicon Valley executives frequently make these days. The dream they are building—the thing that justifies the gargantuan investments being made to develop AI—is an economy without workers. An economy without pesky human employees siphoning off value and contesting executive decisions.

OpenAI CEO Sam Altman has said he expects to see, in the near future, a company launched by an entrepreneur using a bushel of AI agents and no employees to top a billion dollars in value, a prediction that was a hot topic of conversation at the World Economic Forum meeting in Davos this year. Venture capitalist Marc Andreessen has gleefully made the same case, saying capital allocation is one of the only tasks human enough to avoid being automated away by AI.

Set aside for a moment the fact that the consensus view among the engineers and project managers who actually build LLM products is that the technology is vastly over-hyped and cannot deliver on the promises being made on its behalf. Set aside even the economic problems mass unemployment would cause. What would we lose in a world without labor?

One answer is in a sharp new essay by sociologists Caitlin Petre and Julia Ticona about their work interviewing creative workers about their encounters with generative artificial intelligence. The workers’ fear isn’t that AI will produce “better” art than humans. We’ve long used digital technology to make forgeries of artwork; generative AI simply forges the work of making art. The practical worry this engenders is that people will use cheap AI forgeries of creative work to avoid paying human artists, eliminating “the kind of entry-level jobs that allow early-career artists to make connections and a living, however meager, in artistic fields. Indeed, there is a prevailing fear that A.I. will be used as a pretext to eliminate jobs even if its outputs are unimpressive.”

Creative labor is difficult. It requires practice and the friction of pushing up against feedback and the limits of one’s skills. It also requires interacting with other people, to find opportunities and get recommendations and new ideas. In that way, it’s no different from any other kind of labor. Eliminating entry-level jobs in creative industries, as in any field, makes it harder for people to sustain the practice and training that can turn an emerging artist into a master of their craft. In using AI to fake creative work, we might enrich some people by driving down the cost of production, but end up impoverishing society as a whole by starving it of more talented artists.

Petre and Ticona find that artists do sometimes use AI to automate away some drudgery in their work. As they describe it:

Recently, while working on the script for what he described as an “animated family action comedy,” Mr. Rich had drafted the line, “the C.I.A. wouldn’t approve the plan because it requires 10,000 megatons of nuclear power.” He wasn’t satisfied with this — he wanted the line to sound more authentic to nuclear science. So he clicked over to ChatGPT, which he always keeps open now when writing, and prompted it to provide different terminology. ChatGPT suggested “10 exawatts of sustained nuclear fusion,” which Mr. Rich loved — into the draft it went. He estimates that the tool saves him about two hours a day that he used to spend looking stuff up online.

Petre and Ticona call this shortcut “a win-win: Mr. Rich gets more time to write, and his fans get more of his screenplays, TV scripts, short stories and humor pieces.” But I would argue that everyone loses here. The phrase “10 exawatts of sustained nuclear fusion” actually isn’t all that much more authentic to nuclear science,[1] and in the time the author could have spent researching the line, any number of serendipitous things could have happened: a world of facts and concepts he might have learned along the way and stored for use later. One thing he could have done is ask a nuclear scientist for advice. That would have been the true win-win: the writer gets to talk to a physicist, the physicist gets to talk to a writer, everybody’s day is a little weirder, and the screenplay gets a fun little bit of social underpinning.

You want a society where people who specialize in one little corner of the universe get to swap ideas with people who spend their time in another little corner. Social exchange is how you get surprises, new ideas, new perspectives. It’s how you get poetry. Interestingly, it’s that element of surprise that makes “adversarial poetry” effective against LLMs. As the researchers at Icaro Labs told WIRED magazine:

“In poetry we see language at high temperature, where words follow each other in unpredictable, low-probability sequences … In LLMs, temperature is a parameter that controls how predictable or surprising the model’s output is. At low temperature, the model always chooses the most probable word. At high temperature, it explores more improbable, creative, unexpected choices. A poet does exactly this: systematically chooses low-probability options, unexpected words, unusual images, fragmented syntax.”
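For the curious, here is a minimal sketch of what that temperature knob does, assuming the standard textbook approach of dividing a model’s raw scores by the temperature before turning them into probabilities. The function name and the three scores are made up for illustration; they don’t come from the researchers or from any particular model.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, scaled by a sampling temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max so exp() stays numerically stable
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next words (illustrative values only).
logits = [2.0, 1.0, 0.1]

cold = softmax_with_temperature(logits, 0.1)  # low temperature: near-certain top pick
hot = softmax_with_temperature(logits, 5.0)   # high temperature: flatter distribution

print([round(p, 3) for p in cold])
print([round(p, 3) for p in hot])
```

At the low setting nearly all of the probability lands on the top-scoring word; at the high setting the unlikely words get a real chance of being picked, which is the “poetic” regime the quote describes.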

Raising the temperature through unexpected choices seems to reliably throw AIs: recall Go champion Lee Sedol’s legendarily out-of-book move against AlphaGo in game 4 of their landmark 2016 match, which was so unconventional it broke the model and led him to a rare victory. Surprise is also one of the things that makes human experience meaningful and enjoyable. The capacity for astonishment, as C. Wright Mills put it, is what we build when we take the lives of others seriously, and it’s the thing we lose when we cocoon ourselves inside a society of things that replace human contact by approximating human labor.

The good news about peanut allergies

There’s one other bit of good news on a topic I follow as a person interested in risk and social change: the rate of childhood peanut allergies has plummeted in recent years, according to a new study in the journal Pediatrics. I wrote back in March about why peanut allergy is a “useful case for thinking through the ways we handle risk in modern society” because it’s a straightforward way[2] of working through the question of “what matters more: our shared responsibility for each other, or our responsibility for ourselves?” But it’s also an interesting case study in how institutional risk management efforts can sometimes create new risks, and how these new risks can be detected and addressed.

The story goes like this: for the last few decades, childhood peanut allergies had been on the rise, and nobody was quite sure why. In the year 2000, the American Academy of Pediatrics formally recommended that parents not expose young children to peanuts and other potentially allergenic foods, to reduce the already small chance of their developing a potentially fatal reaction. In 2015, a clinical trial in the U.K. found that feeding peanut products to children at an early age dramatically reduced their chances of developing a peanut allergy in the first place. By 2017, the AAP had reversed its earlier recommendation, and by 2025 the rate of childhood peanut allergy had dropped by 43 percent, with peanuts falling from the leading cause of childhood allergy to the number three spot.

In other words, it seems likely that pediatric advice issued in 2000 had the unintended consequence of exacerbating the very risk it was meant to mitigate. But science has a strong tendency to self-correct, and in this case a clever study and sober analysis of the data helped address a problem to which prior knowledge had contributed.

We might add peanut allergies to the list of serious socio-technical problems we created and then quietly alleviated through socio-technical solutions, like the Y2K bug or the ozone hole.

Indeed, it looks like this might be the last evidence-based public health policy victory we get for some time to come.

The last bit of good news is you!

Finally, as we close out 2025 I just want to send a heartfelt thanks to you for subscribing to this newsletter, for reading, and for the occasional feedback you send my way. I have some exciting things planned for this newsletter in 2026, and I’m grateful to have you along as we sail a friendly course and file a friendly chart on a sea of love and a thankful heart.

[1] ChatGPT gave him the right unit (nuclear power is more properly measured in watts, not megatons) but a nonsensical amount. It’s like saying “yeah, the airline wouldn’t let me check my suitcase, because it weighed fifteen million tons.” Compare that with the energy measure Robert Zemeckis used in Back to the Future to activate his time machine: 1.21 gigawatts, which is both satisfying to say (especially if Christopher Lloyd is saying it), and the realistically enormous amount of power you could harness from a lightning strike.

[2] To thank me for not somehow shoehorning “in a nutshell” into this sentence, consider giving someone you love a gift subscription to this newsletter.